Editor’s note

All characters and events depicted in this post are entirely fictitious. Any similarity to actual events or persons, living or dead, is purely coincidental.

Imagine you’re a researcher starting a new project where you use eBird data to create species distribution modeling of songbirds. The only problem is you’ve never worked with eBird data and you’ve never done species distribution modeling. Admist your frantic googling you come across a researcher who did similar things to what you need. They posted their code on GitHub and allowed anyone to use it.

Excited for the lucky break, you download the code and open it up in R. But to your dismay the code is a mess. It’s incoherent, hard to read, and there’s no file describing what each function does nor where to even get started. The few managed chunks of code you could figure out give you unexpected results or non-helpful errors. Your emails to the developer have returned no responses. Frustrated, you finally give up and begin writing the code yourself. A couple months later you finally finish, you look like you just got out the trenches, overworked and past due on your deadline.

No, everything is not fine, Carl

No, everything is not fine, Carl

This is of course a hypothetical example, but in the modern age of science this hardly counts as ‘collaboration’. Two people worked on very similar things, but in different ways. There was an attempt to share code, but there was no help with how to run the code. With the rise of open-source science and repositories like GitHub, SourceForge, RForge, etc., there’s an increasing number of scientists letting the world see their code. In many ways these are good things. Open-source code allows outside users to scrutinize your code, allowing them to find and help fix mistakes. Sharing tools allows researchers to spend less time unnecessarily writing code and more time doing actual research. However, badly written and documented code can be more of a hindrance than a help.

So let’s say you want to turn around and publish your code on an open repository. Or say you’ve been reading this and you’re realizing you’ve been that unhelpful code-sharer from our first story. What can you do to become a more responsible programmer who writes helpful code? Well, that’s where rOpenSci can help.

rOpenSci

![]()

rOpenSci is a non-profit initiative founded in 2011 with the mission to “foster a culture that values open and reproducible researching using shared data and reusable software”. They accomplish these goals by focusing on two things: 1) Creating best practice standards for the R language and 2) Peer-reviewing packages that adhere to these standards. The staff consists of a handful of dedicated experts but the main driving force is the army of volunteer reviewers, editors, and collaborators who help with rOpenSci.

Review Process

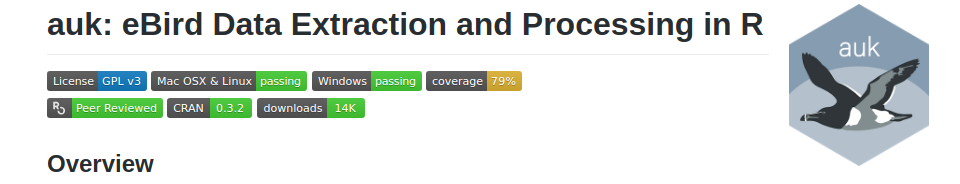

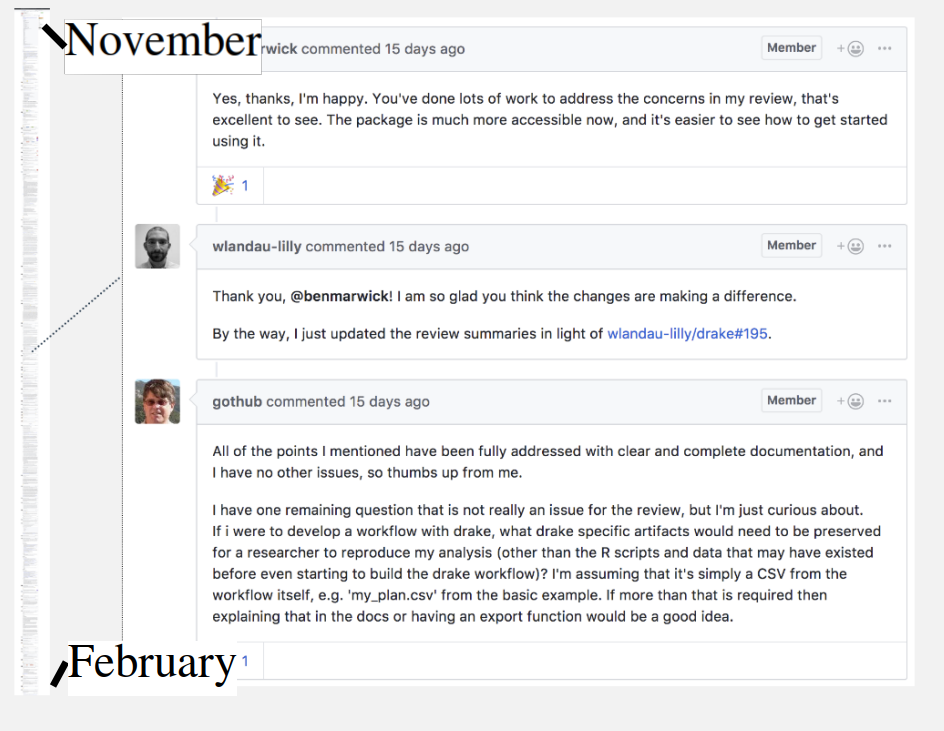

rOpenSci’s main driver for change is their package peer-review system. Packages are reviewed by other developers to assure they are easy to use, well documented, and well coded. Packages that successfully pass this system are given the rOpenSci certified badge which developers can link or attach to their code repository site. This badge assures users that a package adheres to certain standards. rOpenSci also hosts these packages on their website and are featured on their blog, giving many packages a much needed boost in visibility. The review process is unique because of it’s open forum process. Users and reviewers discuss the ongoing evolution of the package in a public forum on GitHub. The process acts more like a discussion than a review, and helps teach developers to think about readability and usefulness of their package.

Standards and guidelines

So what are these standards and guidelines, and how can we use them to become better programmers? rOpenSci literally wrote a book on Best Practices for R. These concepts were taken heavily from Mozilla Firefox’s best practices guide, and Hadley Wickham’s R Package book. The guidelines are continuously discussed on their forums allowing the guidelines to evolve through time, making sure they are up to date wit the latest software issues.

Defining your scope

- An important part in the submission process is having the user define if a package is appropriate and useful to the R community. Per their development policies: “rOpenSci aims to support packages that enable reproducible research and managing the data lifecycle for scientists.” Regardless if a package is within their scope, a developer should define their aims and scope of their package earlier on the packages life cycle. Developers with a defined aim and scope will design better packages that are clear and more functional to users.

Documentation: README file and vignettes

- Building a great package may feel like the majority of the work, but writing helpful documentation is arguably more important if you want others to actually use your package. Well-written documentation helps guide users from start of analysis to the end. The use of vignettes, as opposed to manuals, improve documentation by shifting focus from describing a function’s arguments to describing the how and why of each function. This helps the programmer think clearly about the contents of a function and if it’s easy to use. Not only is a frustrated user less likely to use your package, but it unnecessarily slows down the scientific process.

A package is only as useful as its documentation

A package is only as useful as its documentation

Readable and concise

- Programmers often write code without thinking of how easy the code is to follow. rOpenSci values readable code and pushes heavily on developers to adhere to Hadley Wickham’s style guide when at all possible. These tweaks help users know what’s going on in the code and help to modify code when necessary. They include: naming functions in an understandable manner, maintain correct spacing and ‘grammar’, and keeping each line of code to 80 characters total.

Reproducible

- The concept of reproducibility is that given the same data the code will perform as expected every time, and given new data your function should return a predictable output. Code should perform as expected on different platforms and under unique data inputs. Code should be concise and not contain repeated code. This keeps development time down and allows for quicker edits in code.

Continuous integration and code testing

- Unit testing is an accepted standard in software development but underutilized in most R programmers toolkit. Unit Testing is the idea that when there is a problem in your code (even in the beginning!) you build a test for that failure. When you change your code, you run those tests to make sure that error has not been reintroduced into your code. By continuously testing for these fringe issues you make sure your code always performs as expected even when changed.

- Building on this, rOpenSci pushes the idea that at least 75% of your code should be ‘tested’. To be included in this calculation, a line of code has to be run during at least one test. So even fringe ‘if’ statements just meant to catch a rare error, need a test to make sure the output of that ‘if’ statement is performing correctly. The final tally of this value is called ‘code coverage’ and is another unique badge you can attach to your repository. These requirements help developers to think critically about code function and help them diagnosis problems quicker when writing new code.

- Tests can be created easily with the testthat package. testthat allows you to quickly make tests for functions where you tell it whether you expect a certain value to be returned, error, or graph from a function and it tells you whether the test passes (outputs match expectation) or fails (something unexpected was returned).

Badges act as a report card that allow users to quickly see the reliability of a package

Badges act as a report card that allow users to quickly see the reliability of a package

Journal Submission

The advantages of certifying with rOpenSci doesn’t stop after the review process. Packages that pass the peer-review process can be fast tracked to rOpenSci’s own Journal of Open-Science Software (JOSS). This journal specializes in publishing manuscripts on scientific software, which are accepted quicker because they’ve already gone through code review. Developers can instead submit to other similar journals like the R Journal and Methods in Ecology and Evolution with the confidence that their package has already been reviewed to a high standard. Submitting your package to a journal can not only give you that frustratingly important citation credit, but can grant users another avenue for understanding how your package works. Papers can describe the science behind the methods or act more like long-form vignettes where developers describe in-depth the process and use of a piece of software.

The rOpenSci process

You can read more about the reviewing process here, but essentially:

- A developer initiates an official request on the GitHub page

- Editor determines if package fits into rOpenSci’s scope and that it passes baseline quality and completeness tests

- Two reviewers are assigned to the package and review the package based on ‘usability, quality, style and documentation’. Reviews are submitted to the GitHub page

- Developers modify package until reviewers are satisfied with changes (likely multiple rounds of reviews)

- Packages finally pass (hurray!) and are included on the official list of packages

- The package is included in its own blog post and shared on the rOpenSci Twitter account

- Developers can now submit immediately to JOSS or another journal.

The scientist-programmer hybrid

Many institutions now expect applicants to have high tech literacy when applying for post-graduate positions, and this growing pool of hybrid scientist-programmer underscores the importance of teaching everyone to write better code. In our recent study on Movement Ecology Packages in R, we found that packages routinely lacked adequate vignettes or supplementary papers on the package. Many of these packages remained unclear about how to correctly use the functions for analysis. And in our follow-up user survey on these packages, we found that even highly used packages lacked adequate documentation and were routinely considered to have only ‘basic’ documentation. Clearly programmers of all skill levels are failing to consider usability and readability when publishing their code.

Increasingly scientists are finding themselves in two worlds expected to be both a scientist and a programmer. They’re required to not only be up to date with the latest science findings but the latest software and standards as well. An overwhelming position to be sure. But it’s important to remember that we as science-programmer hybrids don’t have to reinvent the wheel. Software engineers have decades of experience dealing with similar problems that scientists are tackling now. An interesting example is Joel Spolskys blog on being a software developer. Joel started Stack Overflow in 2008, and has written extensively about programming. Back in 2000 he wrote a post entitled 12 steps to better code. In his post he mentions similar things modern scientist-programmers are thinking about now including:

- emphasis on version control

- usability of code (i.e the ‘Hallway Test’ where you take someone walking by in the hallway and see if they can successfully use your software)

- unit testing (particularly nightly builds which is related to continuous integration)

- making a productive work environment for coders (i.e the importance of quiet environment that are free of distractions, in particular his discussion on how coders are often ‘in the zone’.)

And we shouldn’t be afraid to borrow practices from the software industry just because we’re scientists first. At the end of the day no matter your skill level, these kinds of lessons can help you and the entire science community!

Luckily for us, the last five years has seen an increase in visibility on these issues. There are dedicated groups like rOpenSci and The Carpentries (formerly Software and Data Carpentry) who are helping to teach scientists to be more effective programmers. This can only be a good thing for all of us. So really there’s no reason not to be a better programmer today!